Millions of Americans are turning to AI chatbots for health answers, with an increasing number of doctors also integrating them into their practice, reports BritPanorama.

Medical chatbots have emerged as a prominent resource for healthcare professionals, helping them stay updated in an ever-evolving medical landscape. The CEO of one such platform claimed that doctors using it treated more than 100 million Americans last year.

However, not all popular chatbots meet the stringent standards required by healthcare providers. For instance, OpenAI’s ChatGPT has been criticized for inaccuracies and for failing to reflect the latest medical guidance. OpenAI's usage policies prohibit using its services for tailored medical advice without consulting a licensed health professional.

Dr. Ida Sim from the University of California, San Francisco, noted that while ChatGPT serves as a general tool, specialized medical chatbots are superior for referencing clinical guidelines, leading to a substantial uptake among practitioners.

The most common use case

With millions of research papers published annually, staying abreast of developments is a daunting task for physicians. Dr. Jared Dashevsky, a resident physician, emphasized the impossibility of staying current without dedicating excessive time, which many simply do not have.

Consequently, many doctors have begun utilizing chatbots as reference tools to maintain their medical licenses. These chatbots effectively sift through medical literature, providing accurate summaries and references to vital studies, as confirmed by Dr. Jonathan H. Chen from Stanford Medicine.

This workflow enhances the quality of information available to doctors, as Dashevsky explained, particularly benefiting trainees who often work long hours.

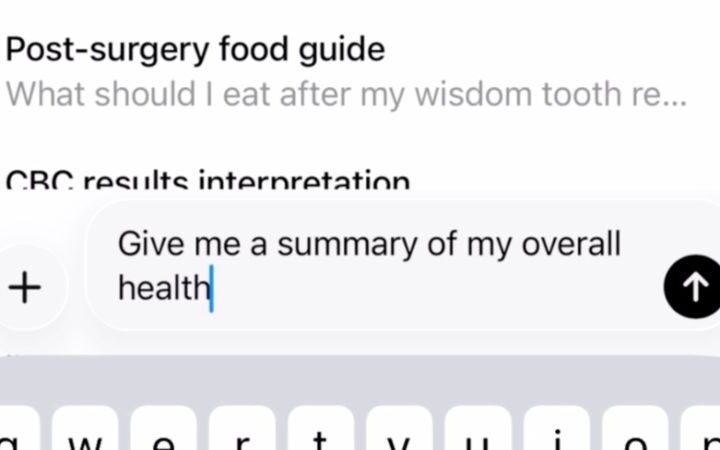

Uploading patient records to AI bots

Some healthcare systems have embraced AI chatbots to enhance patient care, citing promises of safety and privacy. Nonetheless, many doctors resort to unauthorized chatbots, known as shadow AIs, which may mislead users about their compliance with privacy regulations.

The Health Insurance Portability and Accountability Act (HIPAA) mandates confidentiality for health information, but some shadow AIs have led practitioners to wrongly assume it is safe to input protected data for tailored responses. Iliana Peters, a healthcare attorney, cautioned that ‘HIPAA compliance’ is often misrepresented in commercial contexts.

Concerns about data commodification have also arisen: Dr. Carolyn Kaufman noted that sensitive patient information is increasingly found on unauthorized platforms. Kaufman warned that indiscriminately uploading data poses risks to both individual patients and healthcare institutions.

Drafting AI-generated notes

AI chatbots are also aiding doctors in drafting summaries of patient visits and lengthy hospital stays. These digitally accessible notes facilitate continuity of care within medical teams. Dashevsky remarked on the advantages of having AI review patient histories, compared with human review, which can overlook significant details.

Writing letters to insurance companies

Administrative tasks consume an average of nine hours weekly for doctors, creating a significant burden that costs the healthcare system approximately $26.7 billion annually. AI-generated letters for prior authorizations and correspondence with insurance companies have been highlighted as a major efficiency gain.

Dashevsky described how AI-generated letters have streamlined processes, significantly reducing the time commitment previously needed for this administrative work.

Creating a list of possible diagnoses

When consulting with patients, physicians are tasked with diagnosing a variety of potential conditions. Both medical students and practicing doctors are leveraging AI chatbots to assist in generating comprehensive differential diagnoses.

Evan Patel, a medical student, remarked that AI tools can help orient students encountering new conditions, while Kaufman added that the most precise diagnostic suggestions come from entering comprehensive patient data.

What patients need to know

All eight medical professionals interviewed acknowledged their frequent use of AI chatbots, generally viewing these technologies as beneficial for reducing cognitive and administrative loads. Nonetheless, they expressed valid concerns regarding patient privacy.

Despite advancements, Kaufman cautioned that errors are inherent in AI technologies, which can produce inaccurate information. Dr. Chen underscored that patients often misunderstand AI capabilities, noting that responses vary depending on how questions are framed.

Sim highlighted that while AI impacts medical workflows and knowledge acquisition, it cannot replicate the essential element of human experience in healthcare, which is critical to patient-centered care.

Ultimately, understanding the dynamics between AI and patient care remains essential as advancements unfold.